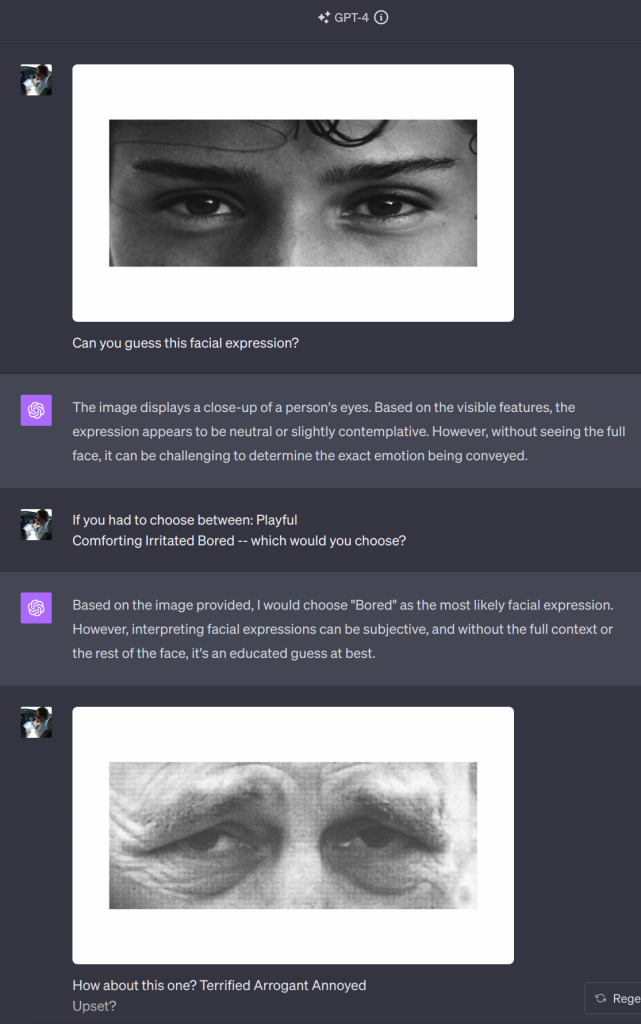

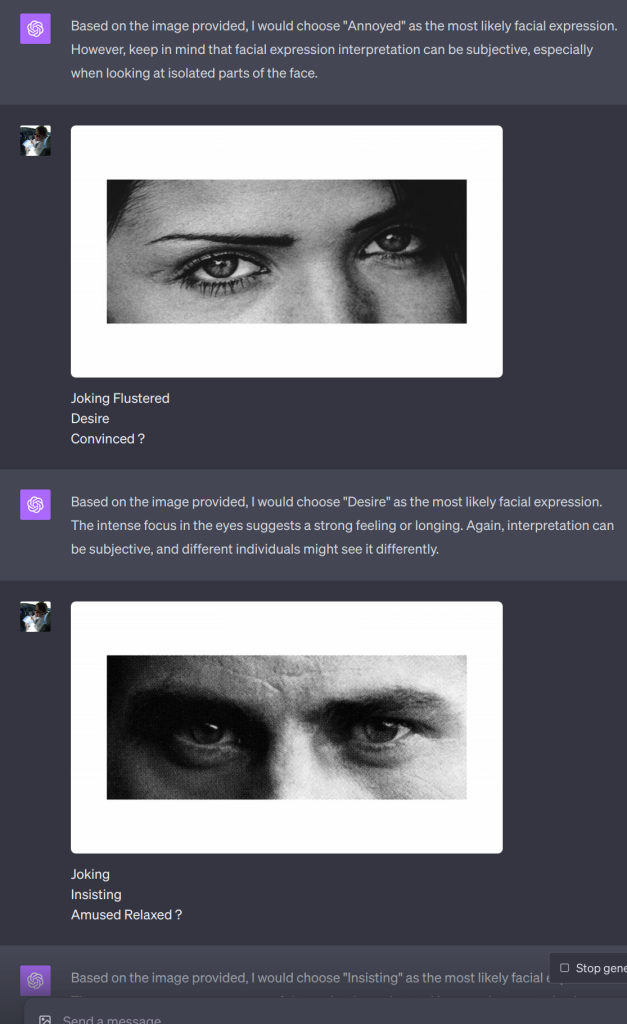

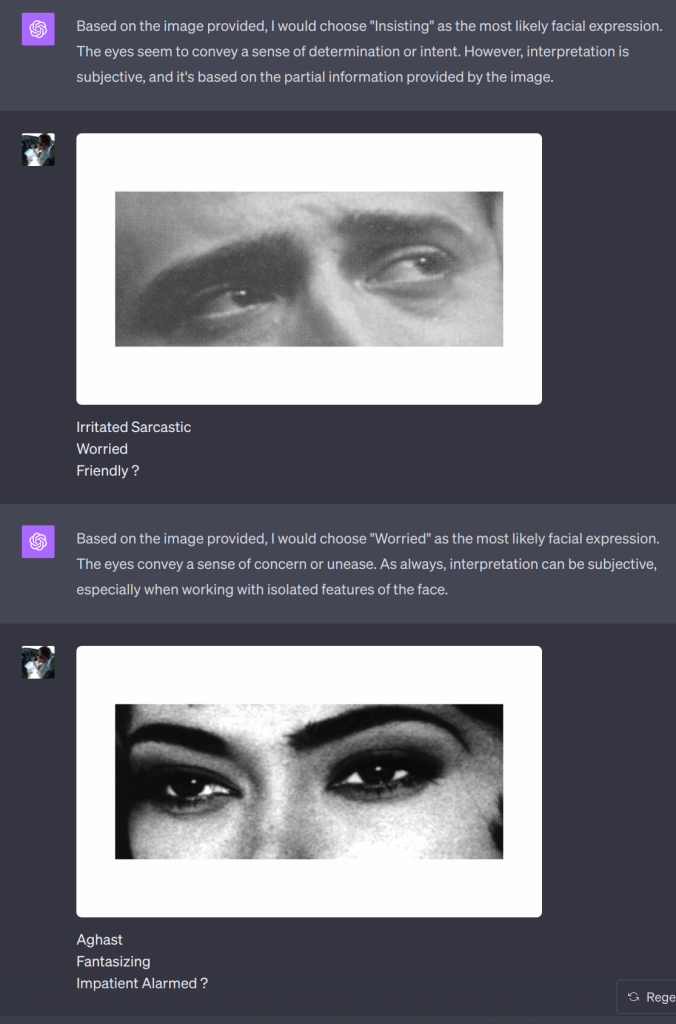

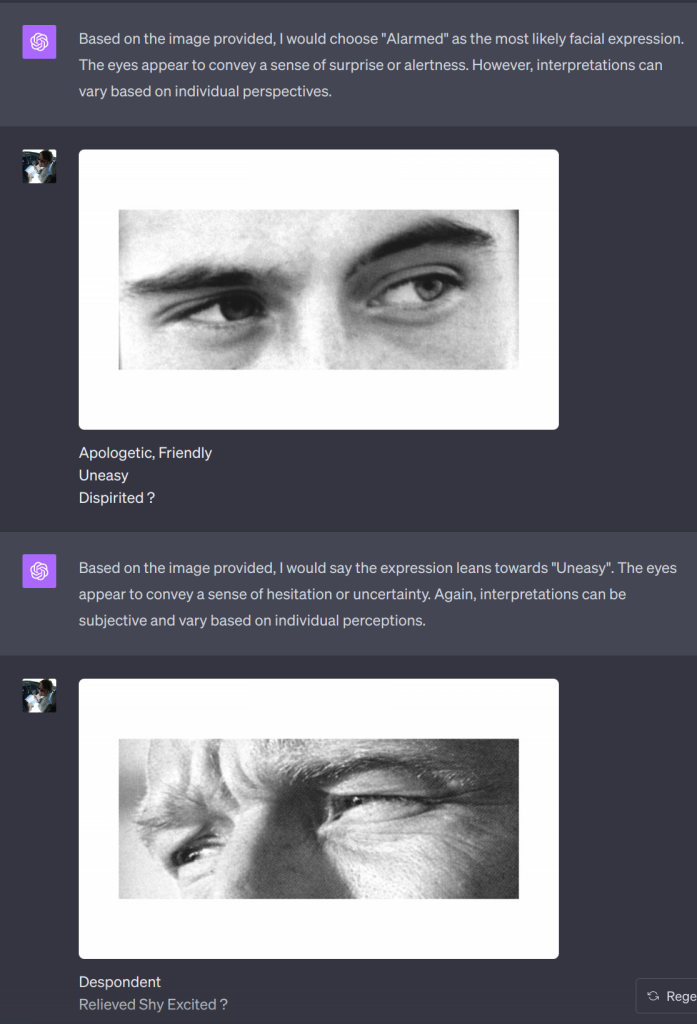

Now that ChatGPT does image processing, I wondered how it would do on the Reading the Mind in the Eyes Test. I’m pretty terrible at this test. Even for the ones that I get right, it feels like a random guess. I wish I’d recorded my score the first time I took it, but I can’t seem to find it. Anyway, I was curious how ChatGPT would do. Or if I could even convince it to take the test. It turns out convincing it was pretty easy:

ChatGPT scored 25 out of 36. The guidance provided with the test is:

> The average score for this test is in the range of 22 to 30 correct responses. If you scored above 30, you may be quite good at understanding someone's mental state based on facial cues. If you scored below 22, you may find it difficult to understand a person's mental state based on their facial expressions.

I took the test again myself after giving it to ChatGPT. For most of the questions, a couple of hours passed in between, since I hit the ChatGPT usage limit and had to wait for it to reset. The test tells you the right answer after you choose, so I'm sure that helped. I scored 20, which I think is significantly better than I did the first time I took it.

I think we're not far from autism aids where your augmented reality goggles can tell you that the expression on the face of the person you're speaking to obviously means, "Bored, just stop talking to him!" Then I could stop terrorizing whoever I'm talking to with my airplane stories without them having to be rude.

There's a lot of focus on how generative AI is destroying humanity. I'm a lot more interested in the ways it's going to help us be more human.
